
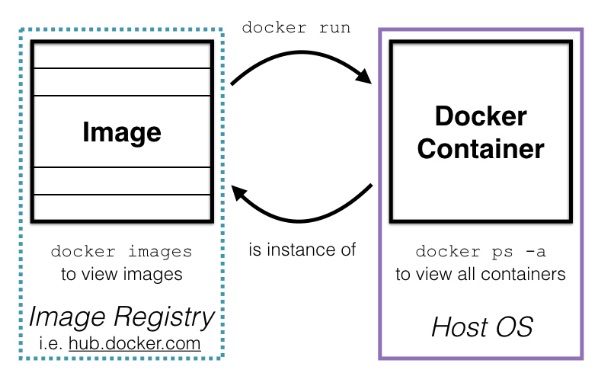

Docker just released a native macOS runtime environment to run containers on Macs with ease. They fixed many issues, but the bitter truth is that they missed something important: read and write access for mounted volumes is terrible.

We can spin up a container and write to a mounted volume by executing the following command:

```
# Write ~100 MB of zeros (100,000 blocks of 1 KiB) to a file in this directory
docker run --rm -it -v "$(pwd):/pwd" -w /pwd alpine time dd if=/dev/zero of=speedtest bs=1024 count=100000
```

Let's compare the results of Windows, CentOS and macOS:

Windows 10: `100000+0 records in`

When you develop a big dockerized application, you are in a bad spot. Usually you work on your source code and expect no slowdowns when building, but the bitter truth is that it will take ages. This GitHub issue tracks the current state. There is a lot of hate in it, so better listen to the "members" instead of reading all the comments. A member of the Docker for Mac team nailed the issue:

> Perhaps the most important thing to understand is that shared file system performance is multi-dimensional. This means that, depending on your workload, you may experience exceptional, adequate, or poor performance with osxfs, the file system server in Docker for Mac. File system APIs are very wide (20-40 message types) with many intricate semantics involving on-disk state, in-memory cache state, and concurrent access by multiple processes. Additionally, osxfs integrates a mapping between OS X's FSEvents API and Linux's inotify API which is implemented inside of the file system itself complicating matters further (cache behavior in particular).
>
> At the highest level, there are two dimensions to file system performance: throughput (read/write IO) and latency (roundtrip time). In a traditional file system on a modern SSD, applications can generally expect throughput of a few GB/s. With large sequential IO operations, osxfs can achieve throughput of around 250 MB/s which, while not native speed, will not be the bottleneck for most applications which perform acceptably on HDDs.
>
> Latency is the time it takes for a file system system call to complete. For instance, the time between a thread issuing write in a container and resuming with the number of bytes written. With a classical block-based file system, this latency is typically under 10μs (microseconds). With osxfs, latency is presently around 200μs for most operations or 20x slower. For workloads which demand many sequential roundtrips, this results in significant observable slow down. To reduce the latency, we need to shorten the data path from a Linux system call to OS X and back again. This requires tuning each component in the data path in turn - some of which require significant engineering effort. Even if we achieve a huge latency reduction of 100μs/roundtrip, we will still "only" see a doubling of performance. This is typical of performance engineering, which requires significant effort to analyze slowdowns and develop optimized components.

Many people created workarounds with different approaches. Some of them use NFS, Docker in Docker, Unison 2-way sync or rsync. I tried some solutions, but none of them worked for my Docker container, which contains a big Java monolith. Either they install extra tools like Vagrant to reduce the pain (Vagrant uses NFS, but this is still slow compared to native read and write performance), or they are unreliable, hard to set up and hard to maintain.

I took a step back and thought about the root issue again. One very mature option is file synchronisation based on rsync. Rsync's initial release was in 1996 (20 years ago), and it is used for transferring files across computer systems.

Docker-sync supports rsync for synchronization. It's a Ruby application with a lot of options, and it tackles the root of the issue: operating on mounted files right now is damn slow. One important use case is 1-way synchronization. In the beginning it worked, but a few days later I got some connection issues between my host and my container. I tried other solutions, but without real success.

Do you know the feeling when you want to fix something but it feels so far away? You realise you don't understand what's happening behind the scenes.

The approach: start an rsync server in the container and connect to it using rsync. This approach has worked for many years for other use cases. Let's set up a Docker CentOS 6 container with an installed and configured rsync service.

1. Create a Dockerfile:

   ```
   # assumed base image and packages (not shown in the original)
   FROM centos:6
   RUN yum install -y rsync xinetd
   RUN sed -i 's/disable[[:space:]]*=[[:space:]]*yes/disable = no/g' /etc/xinetd.d/rsync # enable rsync
   RUN cp /root/nf /etc/nf
   CMD /etc/rc.d/init.d/xinetd start & tail -f /dev/null
   ```

2. Build the container within the repository directory.
3. Start the container and map the rsync server port to a specific host port.

Now we need to sync our shared directory, and sync any changes again as soon as anything changes. After the initial sync, rsync only transfers changes.
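The build, start and sync steps described above are not spelled out as commands. The following is a minimal sketch of them, built on several assumptions that do not come from the original post: the image and container are named `rsync-server`, host port `10873` is mapped to rsync's default daemon port `873`, the rsync configuration copied by the Dockerfile defines a writable module named `volume`, and the Mac host has `fswatch` installed to detect file changes.

```shell
#!/bin/sh
# Hypothetical names and ports (assumptions, not from the original post):
#   image/container "rsync-server", host port 10873 -> container port 873,
#   writable rsync daemon module "volume", fswatch available on the host.
IMAGE="rsync-server"
HOST_PORT=10873
MODULE="volume"
SRC_DIR="${SRC_DIR:-.}"

# URL of the (assumed) rsync daemon module exposed through the mapped port
rsync_target() {
  printf 'rsync://localhost:%s/%s/\n' "$HOST_PORT" "$MODULE"
}

# Build the image from the Dockerfile in the current repository directory
build_image() {
  docker build -t "$IMAGE" .
}

# Start the container detached and publish rsync's port 873 on the host port
start_container() {
  docker run -d --name "$IMAGE" -p "$HOST_PORT:873" "$IMAGE"
}

# One 1-way sync pass from host to container. By default rsync skips files
# whose size and modification time are unchanged; --delete removes files in
# the container that no longer exist on the host.
sync_once() {
  rsync -a --delete "$SRC_DIR/" "$(rsync_target)"
}

# Initial full sync, then one more pass per batch of changes reported by fswatch
watch_and_sync() {
  sync_once
  fswatch -o "$SRC_DIR" | while read -r _count; do
    sync_once
  done
}
```

Usage, from the repository directory: `build_image && start_container && watch_and_sync`. Keeping each step in its own function makes it easy to rerun only the sync loop after the container is already up.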
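The latency figures in the quote above can be turned into a rough estimate for a workload dominated by sequential roundtrips. This sketch assumes N = 10,000 sequential file system calls (an arbitrary illustrative count, not a measurement from the post) and treats total time as N times the per-call latency, ignoring throughput:

$$
\begin{aligned}
T_{\text{native}} &\le N \cdot 10\,\mu\text{s} = 10^{4} \cdot 10\,\mu\text{s} = 0.1\,\text{s} \\
T_{\text{osxfs}} &\approx N \cdot 200\,\mu\text{s} = 10^{4} \cdot 200\,\mu\text{s} = 2\,\text{s} \\
T_{\text{osxfs, improved}} &\approx N \cdot (200 - 100)\,\mu\text{s} = 10^{4} \cdot 100\,\mu\text{s} = 1\,\text{s}
\end{aligned}
$$

Cutting 100μs per roundtrip halves the time from 2 s to 1 s, which is the "doubling of performance" mentioned in the quote, yet the result is still at least ten times slower than native (1 s versus at most 0.1 s).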
